Too many upside down photos? Take 20 minutes and use AI to flip them.

It’s great when you’ve spent 2 hours scanning photos only to suddenly notice you’ve scanned some random number of them upside down. Rather than painfully going through each one and flipping it, you might feel like you’d rather just jump in front of a truck. Well, when a customer came to us with a similar problem, I immediately wondered if machine learning might be able to help. And guess what? It did. The end.

If you’re interested to know how I did it in 20 minutes, then please continue to read.

Sometimes machine learning can be more of an art than a science, even though it is technically a science. Maybe science is art? That’s a boring topic to discuss later; what is more interesting is the reason I brought it up here.

Whenever I get asked “can we use machine learning to solve that?” my answer is always “I don’t know — let’s try it and see”.

Experimentation is critical to using machine learning successfully. If you spent 6 months building a model for a specific use case, you might find out too late that it doesn’t really work well. Instead, I like to find ways to quickly test something to see if there is some viability to using machine learning to solve a problem.

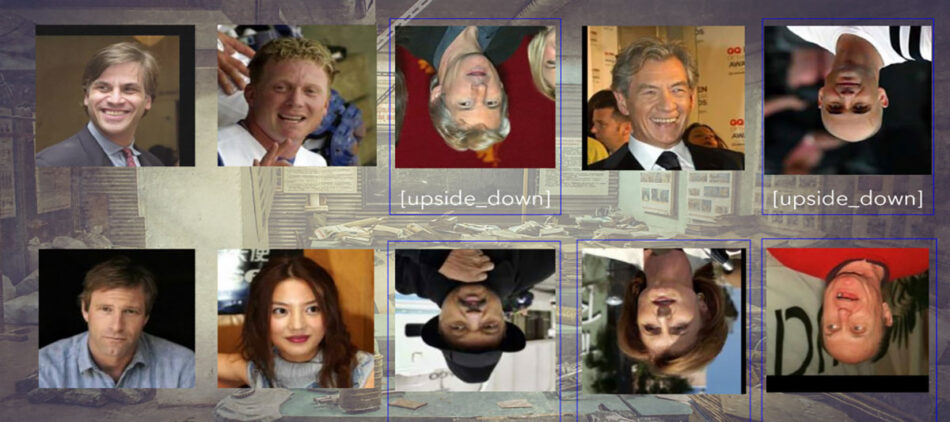

So, back to upside down photos. This particular use case was around photos of people that were sometimes upside down. Most face recognition tools out there struggle when a face is upside down, so we’d need to at least flip it to right side up before submitting it to a face recognition capability.

So I thought, let’s try and train an image classifier to see if we can detect whether a photo is upside down or not. Mind you, this is after looking at the possibility of inspecting the EXIF metadata to see if there was information in there about the rotation; there wasn’t.
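For anyone who wants to repeat the EXIF check first, here is a minimal sketch. It assumes the Pillow library is installed (the post does not say which tool was used to inspect the metadata), and the function names are made up for illustration. In the EXIF standard the Orientation tag has ID 0x0112, and a value of 3 means the image is rotated 180 degrees.

```python
from typing import Optional

ORIENTATION_TAG = 0x0112  # standard EXIF "Orientation" tag ID


def read_orientation(path: str) -> Optional[int]:
    """Return the EXIF orientation value (1-8), or None if the tag is absent."""
    # Assumption: Pillow is installed. Imported here so the pure helper
    # below can be used without it.
    from PIL import Image

    with Image.open(path) as img:
        return img.getexif().get(ORIENTATION_TAG)


def exif_says_upside_down(orientation: Optional[int]) -> Optional[bool]:
    """True if EXIF marks a 180-degree rotation, None if EXIF cannot tell us."""
    if orientation is None:
        return None  # no tag present, which was the situation in this post
    return orientation == 3
```

When `read_orientation` returns `None` for every scan, as it effectively did here, the metadata route is a dead end and something has to look at the pixels instead.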

The first thing I did was hunt for training data. I went to Google Images and searched for ‘faces’. I downloaded as many as I could (around 346) and created a set of right side up faces. Then, I tried a Google Images search for ‘upside down faces’ — that was a mistake for many, many reasons. The most relevant reason is that I want to train the classifier to detect the difference between two things that might otherwise be the same. So I opted to flip all the photos that were right side up, so that each one had an upside down counterpart. The only way I knew how to do that at scale was to use Apple’s Automator.

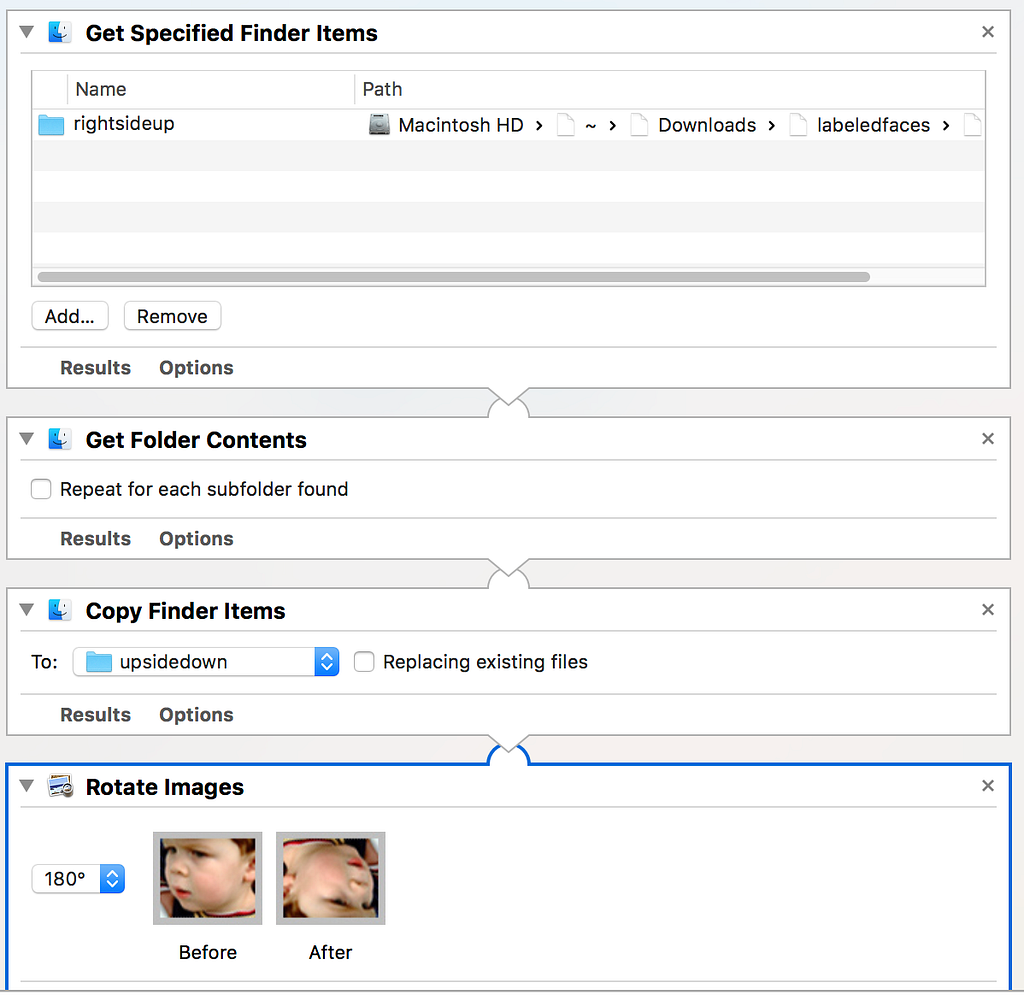

Set up your workflow with Get Specified Finder Items pointing to the folder of faces, linked to Get Folder Contents, linked to Copy Finder Items (creating a new folder), followed by Rotate Images set to 180 degrees.
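If you are not on a Mac, the same copy-then-rotate step can be sketched in Python. This is not what was used for the post; it assumes Pillow is installed, and the function names and the list of file extensions are illustrative choices.

```python
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}  # assumption: the scans use these


def plan_flips(src_dir, dst_dir):
    """Pair every image in src_dir with a same-named output path in dst_dir."""
    src, dst = Path(src_dir), Path(dst_dir)
    return [
        (p, dst / p.name)
        for p in sorted(src.iterdir())
        if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES
    ]


def flip_all(src_dir, dst_dir):
    """Write a 180-degree-rotated copy of each image; originals stay untouched."""
    from PIL import Image  # assumption: Pillow is installed

    Path(dst_dir).mkdir(parents=True, exist_ok=True)
    pairs = plan_flips(src_dir, dst_dir)
    for src_path, dst_path in pairs:
        with Image.open(src_path) as img:
            img.rotate(180).save(dst_path)
    return len(pairs)
```

Like the Automator workflow, this writes the rotated copies to a separate folder, so you end up with one folder per class.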

I ran that workflow and ended up with an equal number of upside down faces. Then, I headed over to one of our open source projects, imgclass, downloaded the command line tool, installed Classificationbox, and fired off the training job.
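An accuracy figure like the one a training tool reports usually comes from holding some examples back and testing on them afterwards. The sketch below shows that general idea only; it is not imgclass's actual code, and the 0.8 ratio, the function names, and the `predict` callable are all assumptions for illustration.

```python
import random


def split_examples(examples, teach_ratio=0.8, seed=0):
    """Shuffle (item, label) pairs and split them into teaching and validation sets."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    cut = int(len(shuffled) * teach_ratio)
    return shuffled[:cut], shuffled[cut:]


def accuracy(validation, predict):
    """Fraction of held-out examples whose predicted label matches the true one."""
    if not validation:
        raise ValueError("validation set is empty")
    correct = sum(1 for item, label in validation if predict(item) == label)
    return correct / len(validation)
```

With two equally sized classes, a classifier that always answers the same label scores 0.5 on this measure, which is a useful baseline to keep in mind when reading the number.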

“Dammit,” I exclaimed as I looked at the resulting accuracy that imgclass outputs. It was only 34%. Not good enough. I needed more training data. So I thought, where can I get a whole lot more faces? That’s right, Labeled Faces in the Wild!

Labeled Faces in the Wild has 13,234 unique pictures of faces. I quickly downloaded that, ran a bash command to pull all the photos out of their nested directories and flatten them into a single folder, then ran the Automator workflow again on this folder to create the upside down class, and started imgclass on the new dataset.
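The post does not include the bash command, so here is a rough Python equivalent of the flattening step. It copies rather than moves, and the function name, extension list, and the numeric suffix used on name clashes are my own assumptions.

```python
import shutil
from pathlib import Path


def flatten(src_root, dst_dir, suffixes=(".jpg", ".jpeg", ".png")):
    """Copy every image under src_root (at any depth) into the single folder dst_dir."""
    src_root, dst_dir = Path(src_root), Path(dst_dir)
    dst_dir.mkdir(parents=True, exist_ok=True)
    copied = 0
    # sorted() lists all files up front, before anything is copied
    for path in sorted(src_root.rglob("*")):
        if not path.is_file() or path.suffix.lower() not in suffixes:
            continue
        target = dst_dir / path.name
        n = 1
        while target.exists():  # same file name in two subfolders: add a suffix
            target = dst_dir / f"{path.stem}_{n}{path.suffix}"
            n += 1
        shutil.copy2(path, target)
        copied += 1
    return copied
```

Keep `dst_dir` outside `src_root`, otherwise a second run would pick up the copies from the first.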

Training Classificationbox on this many photos on my Mac took about 1 hour 45 minutes, so technically, this whole process took longer than 20 minutes, but I really only spent about 20 minutes doing work myself.

Once it was complete, I beamed with pride at the new accuracy number imgclass was reporting: 99.97%.

JAM!

Now I have a model that effectively detects when a photo of a face is upside down, and I can use it in a workflow to correct an endless number of images. If you’re really interested in using this, I’ll save you the 20 minutes + ~2 hours of processing time and delight you with a link to the model here.

Load that state file into Classificationbox and you’re ready to start detecting upside down faces.
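The correction workflow itself could look something like the sketch below. It deliberately does not assume anything about Classificationbox's API: `predict` is a placeholder for whatever function asks the model for a label, and the class name `"upside_down"` is hypothetical and should match whatever the classes were called during training. The in-place rotation helper assumes Pillow is installed.

```python
def correct_orientation(paths, predict, rotate_180):
    """Rotate every image the classifier labels 'upside_down'; return those paths."""
    fixed = []
    for path in paths:
        if predict(path) == "upside_down":  # hypothetical class name
            rotate_180(path)
            fixed.append(path)
    return fixed


def rotate_180_in_place(path):
    """Overwrite the image at path with a copy rotated by 180 degrees."""
    from PIL import Image  # assumption: Pillow is installed

    with Image.open(path) as img:
        rotated = img.rotate(180)  # new in-memory image, independent of the file
    rotated.save(path)
```

Passing the rotation in as a function keeps the loop easy to dry-run: hand it a function that only logs the path, check the list it returns, then swap in `rotate_180_in_place` once you trust the predictions.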

YOU’RE WELCOME.

Too many upside down photos? Take 20 minutes and use AI to flip them. was originally published in Towards Data Science on Medium.