Build a machine learning image classifier from photos on your hard drive very quickly

The imgclass tool lets you take a folder full of images and teach a classifier that you can then use to classify future images automatically.

It works by creating a model and posting 80% of your example images to Classificationbox, which then learns what the various classes of images look like and what their shared characteristics are. The remaining 20% of the images are then used to test the model, to see how good it is.
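The 80/20 split described above can be sketched in a few lines of Go. This is only an illustration of the idea, not the code imgclass itself uses: the function name `splitExamples`, the shuffle step, and the fixed seed are all assumptions made for the example.

```go
package main

import (
	"fmt"
	"math/rand"
)

// splitExamples shuffles a copy of items and returns the first `ratio` share
// for teaching and the remainder for validation.
// The name and signature are hypothetical, chosen for this sketch.
func splitExamples(items []string, ratio float64, seed int64) (teach, validate []string) {
	shuffled := append([]string(nil), items...)
	r := rand.New(rand.NewSource(seed))
	r.Shuffle(len(shuffled), func(i, j int) {
		shuffled[i], shuffled[j] = shuffled[j], shuffled[i]
	})
	cut := int(float64(len(shuffled)) * ratio)
	return shuffled[:cut], shuffled[cut:]
}

func main() {
	files := []string{
		"cat1.jpg", "cat2.jpg", "cat3.jpg", "cat4.jpg", "cat5.jpg",
		"dog1.jpg", "dog2.jpg", "dog3.jpg", "dog4.jpg", "dog5.jpg",
	}
	teach, validate := splitExamples(files, 0.8, 1)
	fmt.Printf("teach: %d, validate: %d\n", len(teach), len(validate))
}
```

Shuffling before cutting keeps the validation set from being dominated by whichever class happens to sort last.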

Developers can then use the Classificationbox API to predict the class of images it has never seen before.
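As a rough sketch of what such a prediction call might look like from Go: the input object mirrors the one shown in the teach request later in this article, but the predict URL and the response shape (a list of classes with scores) are assumptions, so check the API docs served by your own Classificationbox instance. A stub server stands in for Classificationbox so the example runs offline.

```go
package main

import (
	"bytes"
	"encoding/base64"
	"encoding/json"
	"fmt"
	"net/http"
	"net/http/httptest"
)

// input mirrors the input object shown in the teach request in this article.
type input struct {
	Type  string `json:"type"`
	Key   string `json:"key"`
	Value string `json:"value"`
}

// predictRequest is the assumed body of a predict call.
type predictRequest struct {
	Inputs []input `json:"inputs"`
}

// predictResponse is an assumed response shape: classes with scores.
type predictResponse struct {
	Classes []struct {
		ID    string  `json:"id"`
		Score float64 `json:"score"`
	} `json:"classes"`
}

// newPredictRequest base64-encodes the image bytes into a request body.
func newPredictRequest(image []byte) predictRequest {
	return predictRequest{Inputs: []input{{
		Type:  "image_base64",
		Key:   "image",
		Value: base64.StdEncoding.EncodeToString(image),
	}}}
}

// predict posts the image to predictURL and returns the highest-scoring class ID.
func predict(predictURL string, image []byte) (string, error) {
	body, err := json.Marshal(newPredictRequest(image))
	if err != nil {
		return "", err
	}
	resp, err := http.Post(predictURL, "application/json", bytes.NewReader(body))
	if err != nil {
		return "", err
	}
	defer resp.Body.Close()
	if resp.StatusCode != http.StatusOK {
		return "", fmt.Errorf("unexpected status: %s", resp.Status)
	}
	var out predictResponse
	if err := json.NewDecoder(resp.Body).Decode(&out); err != nil {
		return "", err
	}
	best, bestScore := "", -1.0
	for _, c := range out.Classes {
		if c.Score > bestScore {
			best, bestScore = c.ID, c.Score
		}
	}
	return best, nil
}

func main() {
	// Stub standing in for Classificationbox; the JSON it returns is made up.
	stub := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprint(w, `{"classes":[{"id":"cats","score":0.9},{"id":"dogs","score":0.1}]}`)
	}))
	defer stub.Close()

	// The path below is an assumed example, not a documented endpoint.
	class, err := predict(stub.URL+"/classificationbox/models/example/predict", []byte("fake image bytes"))
	if err != nil {
		fmt.Println("predict failed:", err)
		return
	}
	fmt.Println("predicted class:", class)
}
```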

The project is open-source and written in Go, and this article will explain how to use it.

Install it

Install the imgclass tool:

go get github.com/machinebox/toys/imgclass

If you don’t have Go installed, why not?

Verify it installed correctly:

$ which imgclass
/path/to/somewhere/bin/imgclass

Prepare the teaching data

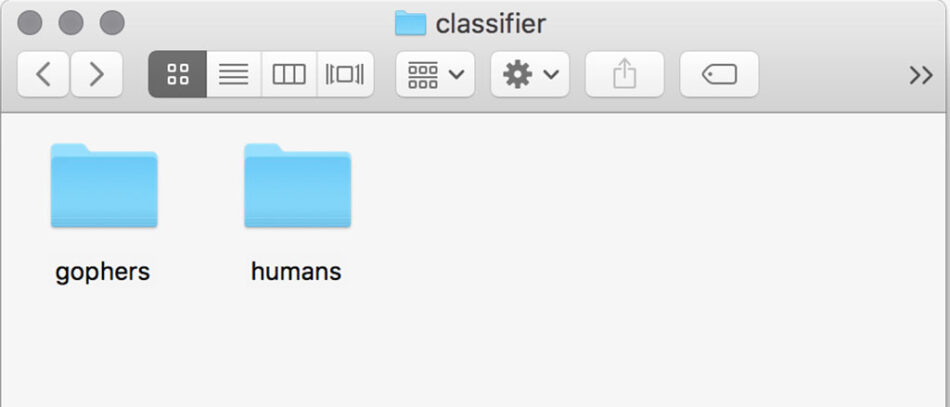

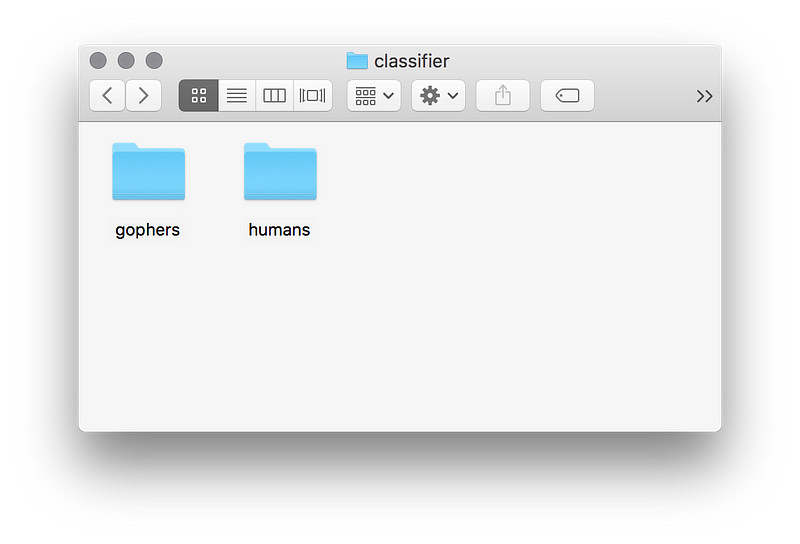

Create a folder somewhere and inside it, create a subfolder for each class.

Inside each folder, add as many examples of the images you want to teach as you can. Usually the more images you provide the better your model will be.
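To make the folder convention concrete, here is a minimal Go sketch that reads such a layout and treats each subfolder name as a class. The helper names `classOf` and `listExamples` are hypothetical, and the sketch builds a throwaway directory with placeholder files so it can run without real images; it is not how imgclass is implemented.

```go
package main

import (
	"fmt"
	"os"
	"path/filepath"
	"sort"
)

// classOf returns the name of the folder that directly contains path.
func classOf(path string) string {
	return filepath.Base(filepath.Dir(path))
}

// listExamples maps each class (subfolder name) under root to the files inside it.
func listExamples(root string) (map[string][]string, error) {
	entries, err := os.ReadDir(root)
	if err != nil {
		return nil, err
	}
	examples := make(map[string][]string)
	for _, entry := range entries {
		if !entry.IsDir() {
			continue // loose files at the top level have no class
		}
		dir := filepath.Join(root, entry.Name())
		files, err := os.ReadDir(dir)
		if err != nil {
			return nil, err
		}
		for _, f := range files {
			if f.IsDir() {
				continue
			}
			examples[entry.Name()] = append(examples[entry.Name()], filepath.Join(dir, f.Name()))
		}
	}
	return examples, nil
}

func main() {
	// Build a throwaway layout so the sketch runs without real images.
	root, err := os.MkdirTemp("", "teaching-data")
	if err != nil {
		panic(err)
	}
	defer os.RemoveAll(root)
	for _, p := range []string{"cats/1.jpg", "cats/2.jpg", "dogs/1.jpg"} {
		full := filepath.Join(root, p)
		if err := os.MkdirAll(filepath.Dir(full), 0o755); err != nil {
			panic(err)
		}
		if err := os.WriteFile(full, []byte("placeholder"), 0o644); err != nil {
			panic(err)
		}
	}

	examples, err := listExamples(root)
	if err != nil {
		panic(err)
	}
	classes := make([]string, 0, len(examples))
	for class := range examples {
		classes = append(classes, class)
	}
	sort.Strings(classes)
	for _, class := range classes {
		fmt.Printf("%s: %d examples\n", class, len(examples[class]))
	}
}
```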

If you’d like to play with this without having to collect lots of image data, you can use the classic cats vs dogs example with this sample dataset. The remainder of this article uses this dataset.

Spin up Classificationbox

Assuming you have Docker installed, you can spin up Classificationbox with this single line of code:

docker run -p 8080:8080 -e "MB_KEY=$MB_KEY" machinebox/classificationbox

- If you need an MB_KEY, you can get one for free from the Machine Box website

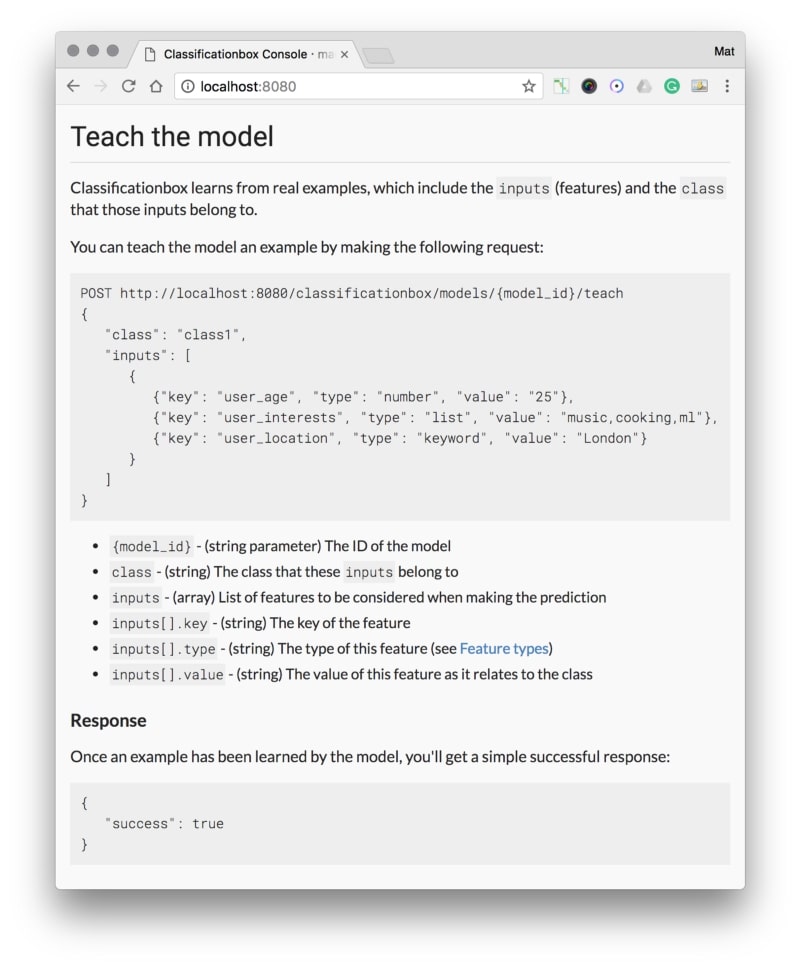

Once it’s running, go to http://localhost:8080 and you should see the API docs:

Teach and validate

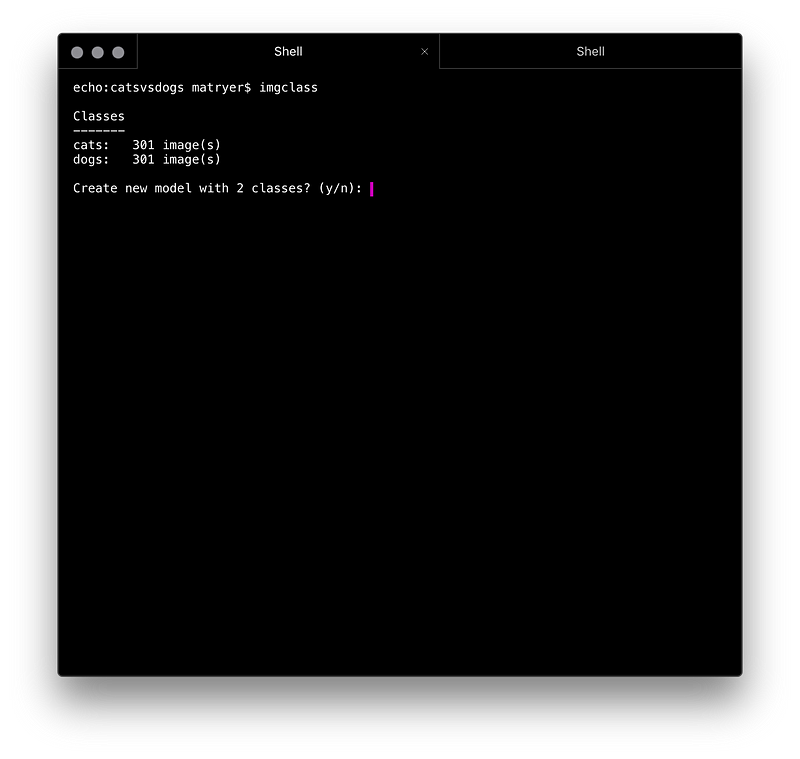

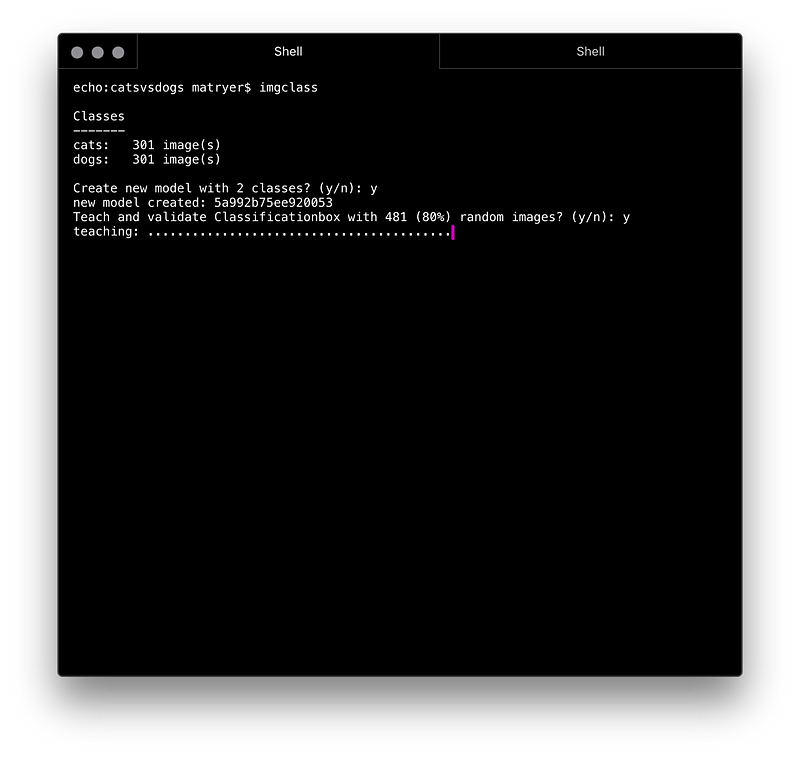

Navigate to the folder where your class folders are, and execute the imgclass tool:

Classifiers work better with a balanced number of examples, so aim to have the same number of examples in each class if you can.
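One simple way to check balance before teaching is to compare the smallest class with the largest. The sketch below is an assumption-laden illustration: `balanceRatio` is a hypothetical helper, and the 0.8 warning threshold is arbitrary, not a value taken from imgclass or Classificationbox.

```go
package main

import "fmt"

// balanceRatio returns the smallest class size divided by the largest.
// 1.0 means perfectly balanced; values near 0 mean one class dominates.
func balanceRatio(counts map[string]int) float64 {
	if len(counts) == 0 {
		return 0
	}
	first := true
	var smallest, largest int
	for _, n := range counts {
		if first {
			smallest, largest = n, n
			first = false
			continue
		}
		if n < smallest {
			smallest = n
		}
		if n > largest {
			largest = n
		}
	}
	if largest == 0 {
		return 0
	}
	return float64(smallest) / float64(largest)
}

func main() {
	counts := map[string]int{"cats": 1000, "dogs": 400}
	ratio := balanceRatio(counts)
	fmt.Printf("balance ratio: %.2f\n", ratio)
	if ratio < 0.8 { // arbitrary illustrative threshold
		fmt.Println("classes look unbalanced; consider evening out the examples")
	}
}
```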

Once the model is created, you’ll be asked whether you want to teach a selection (80%) of the images. Typing y and pressing return will start the process:

The imgclass tool will give each image to Classificationbox for it to learn.

Teaching essentially involves opening each image, converting it into a base64 string, and submitting it to the /classificationbox/teach API endpoint in a request that resembles this:

POST /classificationbox/teach

{

"inputs": [

{

"type": "image_base64",

"key": "image",

"value": "...base64 data..."

]

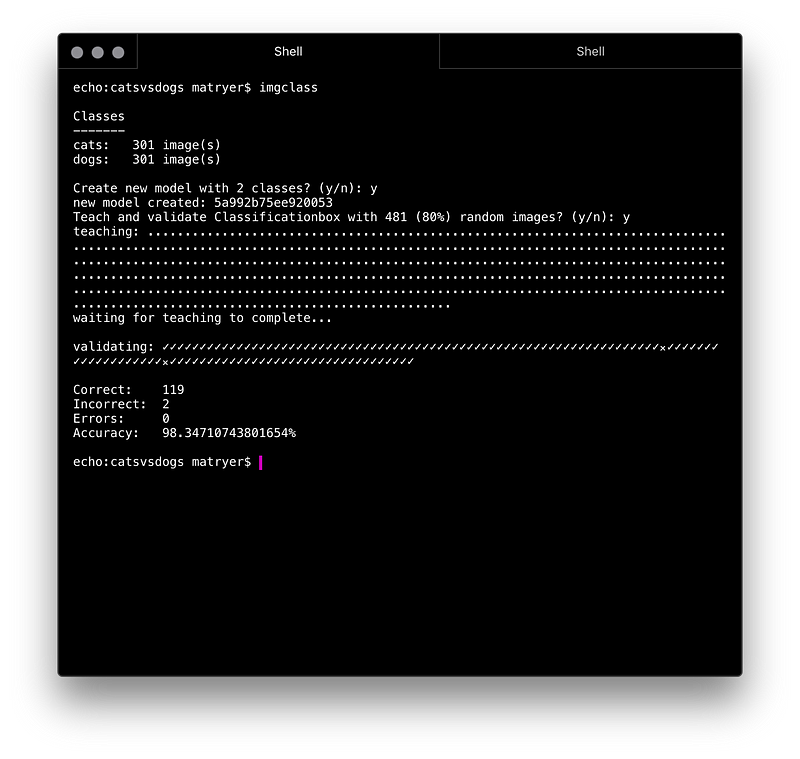

}Once the teaching is complete, the validation process will begin.
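For readers who want to see the teaching step in Go, the sketch below builds a request body like the one above from raw image bytes. The `inputs` fields come from the request shown in this article; the `class` field is an assumption added here, since a teach call needs some way to carry the label, and the type and function names are hypothetical. Nothing is sent over the network; the body is only printed.

```go
package main

import (
	"encoding/base64"
	"encoding/json"
	"fmt"
)

// input mirrors the input object shown in the teach request above.
type input struct {
	Type  string `json:"type"`
	Key   string `json:"key"`
	Value string `json:"value"`
}

// teachRequest is a sketch of the teach body.
// The Class field is an assumption; it does not appear in the request above.
type teachRequest struct {
	Class  string  `json:"class"`
	Inputs []input `json:"inputs"`
}

// newTeachRequest base64-encodes the image bytes and labels them with class.
func newTeachRequest(class string, image []byte) teachRequest {
	return teachRequest{
		Class: class,
		Inputs: []input{{
			Type:  "image_base64",
			Key:   "image",
			Value: base64.StdEncoding.EncodeToString(image),
		}},
	}
}

func main() {
	// In practice the bytes would come from os.ReadFile on an image path.
	image := []byte("fake image bytes")
	body, err := json.MarshalIndent(newTeachRequest("cats", image), "", "  ")
	if err != nil {
		panic(err)
	}
	fmt.Println(string(body))
}
```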

Validation essentially takes the remaining images (20%) and asks Classificationbox to predict which class each one belongs to. If it gets it right, we count it as correct, otherwise as incorrect.
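The counting itself is simple. Here is a minimal Go sketch of it, assuming each validation result is just an expected class paired with a predicted class; the `result` type and `accuracy` function are hypothetical names for this example, and the sample data is invented.

```go
package main

import "fmt"

// result pairs the class an image really belongs to with the predicted class.
type result struct {
	expected  string
	predicted string
}

// accuracy counts correct and incorrect predictions and returns
// the share of correct ones as a percentage.
func accuracy(results []result) (correct, incorrect int, percent float64) {
	for _, r := range results {
		if r.predicted == r.expected {
			correct++
		} else {
			incorrect++
		}
	}
	if len(results) > 0 {
		percent = 100 * float64(correct) / float64(len(results))
	}
	return correct, incorrect, percent
}

func main() {
	// Invented sample data for illustration only.
	results := []result{
		{expected: "cats", predicted: "cats"},
		{expected: "cats", predicted: "dogs"},
		{expected: "dogs", predicted: "dogs"},
		{expected: "dogs", predicted: "dogs"},
	}
	correct, incorrect, percent := accuracy(results)
	fmt.Printf("correct: %d, incorrect: %d, accuracy: %.1f%%\n", correct, incorrect, percent)
}
```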

The results are shown in the terminal:

That’s it

Classificationbox now holds a trained image classifier that you can use in production to classify images automatically.

Unlike other forms of machine learning, you don’t have to be finished at this point. You are free to continue adding teaching examples to improve the model over time. This is useful if you have any kind of human interaction or correction of false positives or false negatives.